How I tried to get into game development and failed, part 3

Final part of my story about game development. First part, second part.

Client side load balancing

In the previous post, I wrote about performance optimizations. We figured that a single 1 CPU server in Azure could handle up to 600 simultaneous users spread across 3 game scenes (also known as game arenas; each scene takes one OS process).

Now we needed a mechanism for distributing the players across multiple servers and game scenes in those servers. There were several requirements for this mechanism:

- When there are multiple servers available, each player needs to be connected to the closest one to reduce lag.

- Within each server, the load has to be distributed evenly across game scenes.

- If a game scene goes offline, it should be taken out of rotation.

The user distribution was a pretty simple task overall because all game scenes were independent of each other and there was no shared state between them. So there was no replication or other hard-to-implement stuff; we just needed to direct the user to the least loaded game scene within an available server.

One option that came to mind was to introduce some front-end server on top of all existing servers that would somehow know about the availability of all game scenes. That, however, would introduce a single point of failure, which would require quite a bit of work to mitigate: we would need to have multiple replicas of that server, connect them using some sort of a load balancer, etc.

There was a much simpler and cleaner solution, though. Our client was written using HTML and JavaScript; it was essentially just a couple of .html and .js files. And that meant we could use Amazon S3 to host our entire client! Which is precisely what we did. We moved the load balancing logic into the client itself, and S3 gave us great availability so that we didn’t need to worry about the single point of failure anymore.

Moving forward, we went even further: we put CloudFlare in front of our S3 files. It’s free and provides CDN functionality - exactly what we needed to reduce the game’s page load time.

Implementation-wise, it was pretty straightforward too. We modified the game so that all arenas in a server wrote information about their states to files on the disk. And we also introduced a ping server - a new separate OS process that read those files and decided which game scene to direct new users to.
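To make the ping server's decision a bit more concrete, here is a minimal Python sketch of picking the least loaded scene from a set of state files. It is not the game's actual code: the JSON format, the field names (`address`, `players`, `updated_at`), and the 5-second staleness threshold are all assumptions made for illustration. A scene whose file has not been refreshed recently is treated as offline and skipped.

```python
import json
import time
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SceneState:
    address: str       # where clients should connect (hypothetical field)
    players: int       # current number of players in the scene
    updated_at: float  # Unix time of the scene's last write


def parse_state(text: str) -> SceneState:
    """Parse the contents of one state file (JSON is an assumed format)."""
    raw = json.loads(text)
    return SceneState(raw["address"], int(raw["players"]), float(raw["updated_at"]))


def pick_scene(states: List[SceneState], now: float,
               stale_after: float = 5.0) -> Optional[SceneState]:
    """Return the least loaded scene with a fresh state file, or None."""
    alive = [s for s in states if now - s.updated_at <= stale_after]
    if not alive:
        return None
    return min(alive, key=lambda s: s.players)


now = time.time()
files = [
    json.dumps({"address": "10.0.0.1:9001", "players": 180, "updated_at": now - 1}),
    json.dumps({"address": "10.0.0.1:9002", "players": 120, "updated_at": now - 2}),
    # Not refreshed for a minute, so it is considered offline.
    json.dumps({"address": "10.0.0.1:9003", "players": 10, "updated_at": now - 60}),
]
chosen = pick_scene([parse_state(f) for f in files], now)
print(chosen.address if chosen else "no scene available")
```

Using the freshness of the state file as a liveness signal means a crashed scene drops out of rotation without any extra bookkeeping.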

Each server had a single ping server running and the client application had a separate file with IP addresses of all ping servers. When starting up, the client queried each of them and measured the response time. The response itself contained the IP of the game scene the ping server selected for that client. The client then connected to the server that replied first.
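The "connect to whoever replies first" idea can be sketched in a few lines. The real client was JavaScript running in a browser; the Python below only illustrates the selection logic, with `asyncio.sleep` standing in for the network round trip and made-up host names and delays.

```python
import asyncio
from typing import Dict, Tuple


async def probe(server: str, simulated_delay: float) -> Tuple[str, str]:
    """Stand-in for a request to one ping server.

    A real client would send a network request here; the sleep only
    simulates the round trip. The reply carries the scene chosen by
    that ping server (a placeholder string in this sketch).
    """
    await asyncio.sleep(simulated_delay)
    return server, f"scene-chosen-by-{server}"


async def first_reply(servers: Dict[str, float]) -> Tuple[str, str]:
    """Query all ping servers at once and keep the first answer."""
    tasks = [asyncio.create_task(probe(s, d)) for s, d in servers.items()]
    done, pending = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()  # slower servers are no longer needed
    return done.pop().result()


# Hypothetical servers with simulated response times in seconds.
servers = {"eu.example": 0.03, "us.example": 0.12, "asia.example": 0.20}
winner, scene = asyncio.run(first_reply(servers))
print(winner, scene)
```

A side effect of this approach is that an unreachable server simply never wins the race, so the client does not need a separate health check.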

If we needed to introduce a new server, we just updated the file on the client. And if we needed to add a game scene to a server, we started up a new instance of the game (a new OS process), and the ping server automatically added it to the rotation.

We also added monitoring functionality so that we could see in real time how many users were present in game arenas across all running servers. Ping servers sent the current arena states to an Azure queue, and a simple Angular app picked them up and displayed them to us. We also used this mechanism for error reporting. All errors from all game arenas were logged and sent to a central storage (we used Azure Table for that).

Overall, this implementation worked very well. We modified it a little bit afterward but the general mechanism remained the same. As for the modification - we introduced a cap on the number of users online. As the size of the game arena was static (it could not be changed without restarting the arena), we needed to maintain a certain density of the players because otherwise, it would be too crowded in that arena. And the opposite was true as well. If there were too few players online, there was no point in distributing them across several game scenes; it would be better to keep everyone in a single arena.

So what we did was we made some changes to the ping server. Even if there were several arenas up and running, the ping server used only one of them up until the number of players hit some limit, after which the ping server added another arena to the rotation.
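One way to express that rule in code is shown below. This is a sketch under assumptions, not the original logic: the cap of 50 players is a made-up number, and the rule "one arena per `cap` players online" is just one plausible reading of the description above.

```python
from typing import List


def pick_arena(player_counts: List[int], cap: int) -> int:
    """Return the index of the arena a new player should join.

    Only the first `active` arenas are in rotation: one arena until
    `cap` players are online, two until 2 * cap, and so on. Within the
    active arenas, the least loaded one wins.
    """
    total = sum(player_counts)
    active = min(len(player_counts), total // cap + 1)
    return min(range(active), key=lambda i: player_counts[i])


CAP = 50  # hypothetical limit, not the game's real value

print(pick_arena([30, 0, 0], CAP))   # few players: everyone stays in arena 0
print(pick_arena([50, 0, 0], CAP))   # limit reached: arena 1 joins the rotation
print(pick_arena([60, 55, 0], CAP))  # both busy: arena 2 joins as well
```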

New tanks

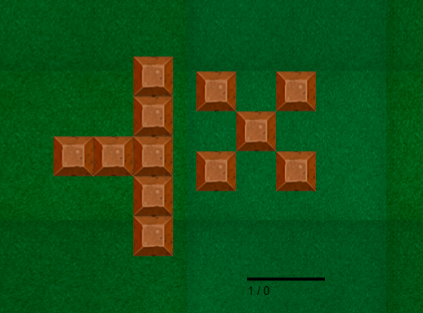

Meanwhile, we slightly modified the way the walls spawned on the map. Instead of single walls, we now had them appear in nice looking clusters:

And the designer created two more tanks and changed the texture of the wall to concrete:

Traffic optimization

Alright, that was the end of December. We slowly moved towards the release. One of the big items left was traffic optimization.

During the whole development period, the client and the server talked to each other using JSON, which is obviously not the best option as it’s too verbose and introduces a lot of traffic overhead. And we knew that the traffic would become one of the most expensive items for us if we didn’t take care of this issue.

Luckily, WebSockets support both text and binary messages, and so the only thing we needed to do was come up with our own protocol to replace the JSON.

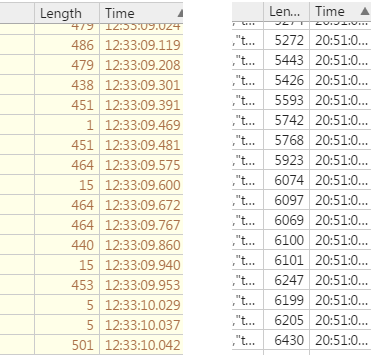

And that’s what we did. We created a set of serializers and parsers for all messages going between client and server. They converted the messages into byte arrays instead of JSON. The result was great. New messages took about 12 times less bandwidth compared to the previous version:

On the right, you can see the length of JSON messages that transmitted the state of the game. On the left, there are analogous messages sent using the binary format. Smaller messages are for commands the client sent to the server; larger ones contain game arena updates the server sent back to the client.

It’s interesting that this functionality - serialization and deserialization of binary messages - is the only part of the game that we covered with unit tests. It was really easy to do so: we just needed to serialize a message with one class and then parse it back with the other and check if the result was equal to the original. And we got tremendous benefits out of doing that too. It’s easy to mess up when you manually convert strings and integers into bytes. Having those tests in place saved us a lot of headaches.
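Here is what such a serializer, parser, and round-trip check might look like. This is a minimal sketch, not our actual protocol: the message fields and the byte layout (`<BHfff`) are invented for the example, and it uses Python's `struct` module rather than the languages the game was written in.

```python
import json
import struct
from dataclasses import asdict, dataclass

# Assumed layout: little-endian u8 kind, u16 tank id, three float32 values.
_LAYOUT = struct.Struct("<BHfff")


@dataclass
class TankUpdate:
    kind: int      # message type tag (hypothetical)
    tank_id: int
    x: float
    y: float
    angle: float


def serialize(msg: TankUpdate) -> bytes:
    """Pack the message into a fixed-size byte array."""
    return _LAYOUT.pack(msg.kind, msg.tank_id, msg.x, msg.y, msg.angle)


def parse(data: bytes) -> TankUpdate:
    """Inverse of serialize()."""
    return TankUpdate(*_LAYOUT.unpack(data))


# Values chosen to be exactly representable as float32,
# so the round trip can be compared with plain equality.
original = TankUpdate(kind=1, tank_id=42, x=120.5, y=64.25, angle=1.5)
binary = serialize(original)
as_json = json.dumps(asdict(original)).encode("utf-8")

# The round-trip test: serialize, parse back, compare with the original.
assert parse(binary) == original
print(len(binary), "bytes in binary vs", len(as_json), "bytes as JSON")
```

Note that arbitrary floats lose precision when squeezed into 32 bits, so a real test suite would compare those fields with a tolerance instead of strict equality.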

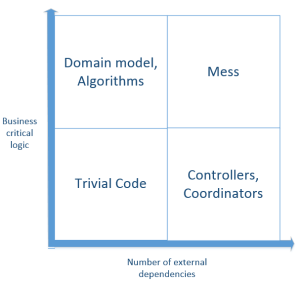

The tests essentially fell into the upper-left corner of this diagram (taken from this article):

They don’t have any external dependencies and thus are simple to write. And they cover important business logic and therefore are extremely valuable.

As for the other parts of the code, we decided that it wasn’t worth it to create tests for them.

Beta testing

Along with the traffic optimization, we added a couple more features. Such as the minimap:

It displayed your position (large white point), positions of all tanks in the arena (small white dots), and also the position of the leader of the arena (large gold point).

Actually, we added this gold point later, after the release. It introduced a lot of tension (and fun) to the game as now you could easily find the current number-one of the rating and shoot him :) Which I did quite often myself.

But let’s not break the storyline. Toward mid-January 2017, we decided to release a beta version of the game and get feedback from our friends. So we deployed the latest version to staging and sent a link to a few people.

The beta test revealed some issues with lags. But to explain them, I need to step back for a moment.

As I wrote in Part 1 of this series, the game can mitigate problems with lag. To do so, it works with the timing of the incoming messages. For example, if you want to change the direction of your tank, you press a button and the server receives a command from you. It then calculates your lag as the difference between the message’s timestamp and the actual time when it received that message. After that, the game rewinds the state of your tank back in time for the duration of the lag, applies the command, and replays your tank’s state forward to the present again.

For you, as a player, it looks like a great experience. You play as if there were no lags whatsoever. However, the rest of the arena would see such rewindings as unexpected changes in your position. They are not noticeable when they are small. But the larger they are, the more quirky they look.

And so there should be a balance between your well-being and the experience of all other players. The game maintains that balance by imposing a limit on the amount of lag it compensates for. If the lag between the server and the client is more than 200 ms, the game rewinds time by only 200 ms, and the client’s local state gets corrected with the next update from the server.

So the problem was that when the lag spiked to more than 200 ms for some reason, the resulting correction looked horrible. Every object on the game scene just jumped abruptly from one position to another.
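The rewind, apply, replay sequence with the 200 ms cap can be sketched on a deliberately simplified model: a tank moving along one axis at a constant velocity. The `Tank` class and the 1D physics are assumptions for illustration; the real game state was of course richer than this.

```python
from dataclasses import dataclass

MAX_COMPENSATED_LAG = 0.2  # seconds; the 200 ms limit described above


@dataclass
class Tank:
    x: float   # position along one axis (simplified 1D model)
    vx: float  # velocity in units per second


def apply_command(tank: Tank, new_vx: float,
                  sent_at: float, received_at: float) -> float:
    """Apply a velocity change as if it had arrived without lag.

    Returns the amount of lag that was actually compensated for.
    Assumes client and server clocks are synchronized.
    """
    lag = max(0.0, min(received_at - sent_at, MAX_COMPENSATED_LAG))
    tank.x -= tank.vx * lag  # rewind: undo movement made with the old velocity
    tank.vx = new_vx         # apply the command at the rewound moment
    tank.x += tank.vx * lag  # replay: move forward again with the new velocity
    return lag


# A 125 ms lag is compensated in full.
tank = Tank(x=100.0, vx=10.0)
apply_command(tank, new_vx=-10.0, sent_at=5.0, received_at=5.125)
print(tank.x)  # 97.5

# A 500 ms lag is only compensated up to the 200 ms limit.
laggy = Tank(x=100.0, vx=10.0)
compensated = apply_command(laggy, new_vx=-10.0, sent_at=5.0, received_at=5.5)
print(compensated, laggy.x)
```

In the second case the server ends up with a position that differs from what the laggy client predicted locally, which is exactly the gap the next server update has to correct.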

We fixed that by moving all objects gradually. Now if you experience a lag, the game feels like your tank is stuck in mud. Which is much better than flickering.
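A simple way to get this kind of gradual movement is to limit how far a displayed object may travel toward its corrected position per frame. The sketch below shows that idea for a single coordinate; the function name and the step size are illustrative assumptions, and the game may well have used a different smoothing scheme.

```python
def step_toward(displayed: float, target: float, max_step: float) -> float:
    """Move the displayed coordinate toward the server's value,
    but by no more than max_step per frame."""
    delta = target - displayed
    if abs(delta) <= max_step:
        return target
    return displayed + max_step if delta > 0 else displayed - max_step


# The server says the tank is at 10, the client currently draws it at 0.
position = 0.0
frames = []
while position != 10.0:
    position = step_toward(position, 10.0, max_step=4.0)
    frames.append(position)
print(frames)  # [4.0, 8.0, 10.0]
```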

Another issue was that, over time, the client clocks went out of sync with the server. We fixed that by doing periodic clock synchronizations during the game.
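For illustration, here is one common way to estimate the offset between a client clock and a server clock from a request/response pair. It assumes the network delay is roughly symmetric, and it is a generic technique rather than a description of what bist.io does; all names and numbers are made up.

```python
from typing import List, Tuple


def estimate_offset(sent_at: float, server_time: float, received_at: float) -> float:
    """Estimate (server clock - client clock) from one exchange.

    sent_at and received_at are read from the client clock, server_time
    from the server clock. The server's timestamp is assumed to have been
    taken halfway through the round trip.
    """
    midpoint = (sent_at + received_at) / 2
    return server_time - midpoint


def best_offset(samples: List[Tuple[float, float, float]]) -> float:
    """Use the sample with the shortest round trip, as it is the least
    distorted by network jitter."""
    sent_at, server_time, received_at = min(samples, key=lambda s: s[2] - s[0])
    return estimate_offset(sent_at, server_time, received_at)


samples = [
    (100.0, 250.5, 101.0),     # slow exchange: 1 s round trip
    (102.0, 252.125, 102.25),  # fast exchange: 0.25 s round trip
]
offset = best_offset(samples)
print(offset)  # 150.0
```

Repeating this periodically during the game keeps the estimate fresh as the clocks drift.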

Approaching the release

So we took care of the issues that came up during the beta testing and started preparing for the release. We added an uglifier for the code on the client, updated input validation rules so that the client couldn’t break the game even if they knew the details of the binary protocol, and some more.

The designer started to become a bottleneck. She worked hard but still fell a little bit behind the development. Finally, she completed the remaining tanks. And she also colored them for visual variety. Here’s what we had by that time:

In this picture, you can see all the 6 tanks colored in different ways.

We made some final optimizations. We combined existing game art into larger images so that the client didn’t have to open lots of connections to the front-end to download them all. We made the client start downloading the pictures in the background right away, even before the user clicked the "Play" button. And the same for the search of the most appropriate game arena: it started automatically in the background.

I must say that we paid a lot of attention to details, even small ones. Everything to make the game more enjoyable and fun. For example, smooth tank rotation (I personally experimented with the angular velocity so that the rotation looked as nice as possible), slight glow over bullets to make them brighter, and so much more.

One of my favorites is the death screen that appears after you are killed. It shows you what happens on the battlefield for the next 5 seconds while slowly fading away to the login screen. Imagine seeing the enemy blatantly picking up a gold star that appeared after your death. It would make anyone want to go back and avenge themselves.

Now that I’m going through the list of our git commits, I recall this feeling of utter satisfaction of solving one hard problem after another (and there were tons of them in this project). It was literally a sense of flow that lasted for 6 months.

Release

By the end of January, we completed the final touches, put together the main page, and found a good hosting provider. As I mentioned previously, the only two things we needed from the provider were a decent CPU and a good network channel. Cloud - be it AWS or Azure - wouldn’t suffice here because they charge extra for the traffic. And with our bandwidth usage, that could easily be hundreds or even thousands of dollars per server.

The provider we found offered a nice 2 core CPU server with 10,000 GB data included for only $60 a month. Which was exactly what we needed: a lot of traffic and a decent CPU.

Finally, on February 4th, we released the game:

We bought the domain beforehand, in November 2016. I liked it because it’s only 4 letters long, sounds good (at least to my ear), and is simple to remember.

You can go ahead and play the game but keep in mind that the current version is a couple of months ahead of what I’ve been telling you about so far. It contains more features and more tanks, which I write about below.

After the release, we experimented with the game balance. The first version was a little less dynamic than we wanted. That’s because, in Version 1, we made the bullets too slow. The fire rate was also too low for my taste. We increased that and the game started to feel much better.

I would say that after a couple of attempts, we got a really good balance with our first release. A player with decent skill could compete against the arena leader even with a weak tank. Which I did repeatedly. One of the most fun activities in the game was to upgrade to Level 2 and go after the arena leader who usually had the best tank by that time. In about 50% of cases, I killed them off.

Note that we have changed the balance since then, and going after the strongest tank with the weakest one most likely wouldn’t work now. The reasoning behind this change and the details about the change itself are below.

After the release

After the release, our first priority was to bring players to the game. We had no idea how to do that and so we started trying different options.

First off, we bought some traffic on Google AdWords just to test how this would work for us. It didn’t. The conversion rate and the price per click were too high for a free online game.

Then we found a cheaper option where you could buy random traffic in bulk for much less than on AdWords. With it, we finally got our first few players. People randomly entered the game, played a little bit with bots, some stayed for longer but most quit. The result wasn’t non-existent like with AdWords but was close to it.

I remember sitting in front of my monitor and constantly looking at the stats. If someone joined the game, I was like "Hey, let’s find this person among all these bots populating the arena!" Sometimes, I did and sometimes the person left the game before I could do that. We thought about differentiating bots from real players on the minimap but decided not to do that.

On the next day, we found several aggregators of io games. Those websites had an audience relevant to us and so we submitted bist.io to one of them. Things started to take off. We finally got quite a few users who played the game long enough to displace bots from the top lines of the game’s leaderboard. On February 5th, a day after the release, the game set a record of 10 simultaneous players online.

We created Twitter and Facebook accounts for the game and added social media buttons so that people could follow us and share the game. Of course, no one cared enough to do that.

As we submitted the game to more aggregators, the number of players started to rise. The week after the release, we achieved a new record - 25 simultaneous players. Checking stats became addictive. There was a point where I looked at the monitoring website every 10 seconds to see if there were more people coming in.

Playing the game was fun too. Tank duels lasted anywhere from a couple of seconds to a couple of minutes, and if your enemy was sophisticated enough, the battle could be very intense and rewarding (if you won of course). The first week or two after the release we spent at least as much time playing the game as developing it. Fighting with bots was nowhere close to the challenge of going after real users.

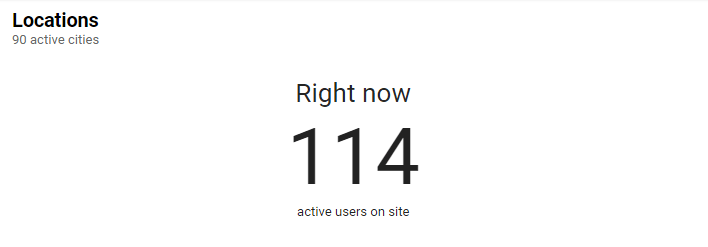

A couple of days later, a famous YouTuber recorded a video about the game and we set an all-time high of 110 simultaneous users online and about 13K visits that day:

We haven’t repeated this result ever since.

We started to think about more creative ways to spread the word. One thing we tried to do is encourage people to share the game on social media. And so we introduced this feature with flags. You could customize your tank by selecting one of the several available flags but to do so, you needed to share the game first:

The feature itself came out really nice looking. The flags turn naturally, mimicking the tank’s movement and fade over time when the tank stands still:

To implement it, we took the double pendulum problem and adjusted it for our needs.

The bottom line didn’t look as great, though. Some people started posting the game on Twitter and Facebook but not nearly as much as we hoped for.

We also made the game mobile friendly by adding touch support. Now you could move the tank by touching the left side of the screen and aim the fire with the right side:

We also planned to release a mobile version for iOS but never got around to it. The release itself would have been pretty straightforward, though, as we had already completed the major part by introducing the mobile support.

By mid-February, we started working on monetization. We tried to join Google AdSense but they had some very strict requirements we couldn’t get around, and so we decided to go with an AdSense partner. More on the financial results below.

We also made a ton of little changes here and there in February. For example, we added a new dual-turret tank that you saw in the video above, a new logo for the game, a system that allowed us to gradually close out game arenas in order to push a new version with updates, etc.

We also added a team mode where you could share a link with a friend and form a team, and introduced localization of all captions and images with text, although we only translated it into Russian.

I also recorded a video for promotion purposes. This is one of those moments where I went after the leader of the arena and shot them. Twice :)

By mid-March, it became clear that the game’s user base was stagnant. It didn’t grow; even worse - it slowly declined. Also, we relied almost fully on the users that came from the aggregator websites; bist.io had a very small base of its own. As the game faded further down in the listings of those aggregators, so did our audience.

It could have been fine if the game had made some decent money, but it didn’t. We were getting about $250 a month from ads, which was enough to pay for the servers but not nearly enough to justify the labor of two programmers.

We decided to look at similar games to see what was in them that we could adopt in ours. After some research, we prepared for one final push: we were going to re-balance the game completely.

We came up with a brand-new progression system and the notion of tank classes.

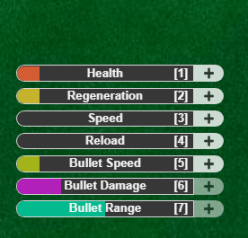

The progression looked like this:

Now you could spend collected stars on advancing one of the characteristics of your tank. And after the tank of level 3, you could select which way to go further:

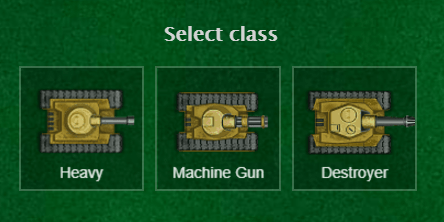

We’ve made 3 tank classes:

- Heavy was the same set of single and dual-turret tanks we had before.

- Machine Gun did less damage with each bullet but had a good fire rate. With the Bullet Damage upgrade maxed out, your tank could become a Chuck Norris of all tanks.

- Destroyer, on the contrary, had a slower fire rate but with the latest tank of this class, you could kill off anyone with just a couple of shots.

The reasoning behind this update was that it would encourage people to stay longer in the game in order to advance their tanks. We hoped it would increase the retention. And people did stay longer. It now took more time to get to the last level and with this choice in place, we got a bit of replayability.

To offset the longer time in the game, we made it so that you don’t start over when you get killed. The game remembers your progress for up to one day, so if you leave and come back within 24 hours, you will get your tank back with all upgrades.
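The 24-hour rule boils down to storing a timestamp next to the saved progress and checking it on return. Below is a minimal in-memory sketch of that idea; the `ProgressStore` class, the player identifier, and the shape of the progress data are all hypothetical and say nothing about how the game actually identifies returning players or where it keeps their state.

```python
from typing import Dict, Optional, Tuple

PROGRESS_TTL = 24 * 60 * 60  # seconds; the one-day window described above


class ProgressStore:
    """Remembers each player's progress for a limited time."""

    def __init__(self) -> None:
        self._saved: Dict[str, Tuple[float, dict]] = {}

    def save(self, player_id: str, progress: dict, now: float) -> None:
        self._saved[player_id] = (now, progress)

    def restore(self, player_id: str, now: float) -> Optional[dict]:
        """Return the saved progress, or None if there is none or it expired."""
        entry = self._saved.get(player_id)
        if entry is None:
            return None
        saved_at, progress = entry
        if now - saved_at > PROGRESS_TTL:
            del self._saved[player_id]  # expired: the player starts over
            return None
        return progress


store = ProgressStore()
store.save("player-1", {"level": 3, "stars": 12}, now=0.0)
print(store.restore("player-1", now=3 * 60 * 60))   # back after 3 hours
print(store.restore("player-1", now=25 * 60 * 60))  # back after 25 hours: None
```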

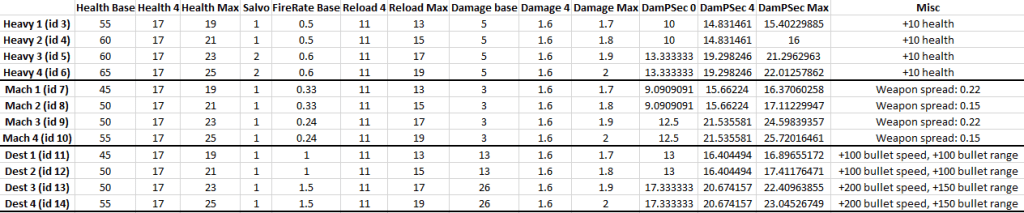

Figuring out the balance between all those classes with all possible upgrades was a challenge too. We ended up with a whole spreadsheet of formulas that helped us calculate the damage each tank should deal in order to remain competitive against other tanks:

By the beginning of April, the game still had about 7K daily visits but that wasn’t nearly enough to make this enterprise profitable. And the stats had a clear downward trend. At the time of writing this, bist.io has about 2K visits a day and brings in about $130 in revenue monthly.
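To give a flavor of the kind of formula such a spreadsheet can contain, here is a toy example that keeps damage per second equal across classes with different fire rates. It is purely illustrative: the target of 60 damage per second and the fire rates are invented, and the real formulas also had to account for upgrades and other characteristics.

```python
def damage_per_bullet(target_dps: float, shots_per_second: float) -> float:
    """Damage each bullet must deal so the tank reaches target_dps."""
    return target_dps / shots_per_second


TARGET_DPS = 60.0  # hypothetical balance target

# Hypothetical fire rates in shots per second for each class.
fire_rates = {"Heavy": 2.0, "Machine Gun": 6.0, "Destroyer": 0.5}

for tank_class, rate in fire_rates.items():
    print(tank_class, damage_per_bullet(TARGET_DPS, rate))
```

With these numbers, a fast-firing tank needs weak bullets and a slow-firing one needs very strong bullets, which matches the trade-off between the Machine Gun and Destroyer classes.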

Final thoughts

We tried every marketing option we could. Now, after all those trials and errors, I understand that the only way such a game can get significant traction is if enough YouTubers post videos about it. This is the only way to get a sufficient user base to make the game profitable. And we failed to convince YouTubers to do that.

I would identify two reasons for this failure. First, the game wasn’t a good market fit, to put it in startup terms. Most users seem not to share our views on what constitutes a fun game. And second, it was bad timing. The number of io games was already rising when we started the development. By the time of the release, there were tens of them on the market already. I’m pretty sure that had we released the game just one year earlier, the results would have been a lot different.

It’s interesting that, although the game turned out to be a failure from the financial standpoint, I would say that the underlying code base, on the contrary, is a masterpiece. And I have pretty high standards when it comes to evaluating code bases. We hardly had a single bug during the whole development process, despite the low test coverage. New functionality came out rapidly. In fact, even when developing this last chunk of functionality with tank classes and upgrades, it was the designer who was the bottleneck; we could have shipped it much earlier had it depended on us exclusively.

The server code is stable too. Just checked the uptime - the game has been up and running for 3 months without a single reboot already.

It was a good 6 months and a fun experiment overall. Although we haven’t achieved the goals we set, we got good experience out of trying to. And hey, I have this story to share with you, so that’s not bad either.

I will write my dream game eventually. Probably not in the near future, though.
